What should our app memory footprint be? How fast is our app rendering in the latest release candidate? How quickly will our app drain the user’s battery in the next release?

When testing app performance, common questions like these are difficult to answer right away. Usually, the correct answer is, “It depends.” Every team should consider not only the questions above but also the app’s functionality and the type of mobile devices (brand/model/OS) used during testing.

At Apptim, we want to help you answer these questions early on during development and testing. We provide you with a way to define your own thresholds for the most important mobile experience KPIs in your app.

Your first test with Apptim will use default thresholds based on Google and Apple’s best practices (Android/iOS), plus other market benchmarks that consider device fragmentation.

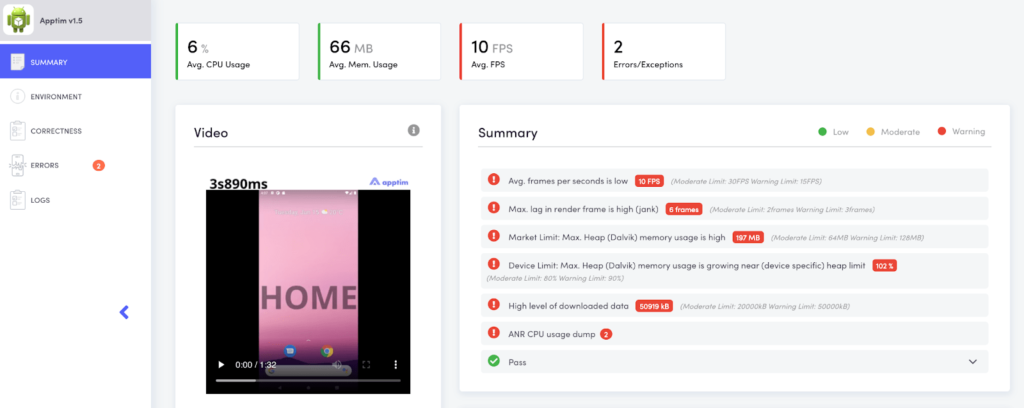

In the main Dashboard and Summary section, each metric is evaluated against the default thresholds set in Apptim. Each metric gets a green, yellow, or red mark depending on how it compares to those thresholds. The yellow and red marks correspond to the Moderate and Warning Limits, respectively.

- Moderate Limit: The metric value exceeds a “recommended” threshold and might cause issues on some devices. 🟡

- Warning Limit: The metric value exceeds an “acceptable” threshold and is likely to cause issues on some devices. 🔴

Note: Check out what the current default thresholds are for Android and iOS apps.

After you run a few tests, you’ll probably want to set your own thresholds for your specific app and the devices you test on (see Benefits section below). At the very least, you’ll need different thresholds per device type. Test on devices that differ strongly in hardware (e.g., low-end vs high-end devices) to evaluate how your app behaves across the wide range of devices sold in the market.

How can you set your own thresholds?

Mobile performance thresholds are set inside your Apptim Cloud account in a specific workspace and app. This way, the thresholds can be accessed and used by different teammates when running tests inside the same workspace. The only prerequisite to defining your thresholds is to have at least one report published in a cloud workspace.

Once a report is published, you need to:

- Log in to your Apptim Cloud account.

- Navigate to a workspace/app.

- Click on the SET UP THRESHOLDS FOR YOUR APP button.

- Write a name for your threshold file, like “Low-End Devices”.

- Define the performance metrics and thresholds for each using specific keys (see Keys).

- Save the thresholds’ content.

This will automatically create a .yml file with a key for each performance metric and a moderate and warning threshold. For example:

    # CPU
    cpu_avg:
      moderate:
        operator: ">"
        value: 20
      warning:
        operator: ">"
        value: 40

The above key means that if the average CPU consumption measured during the test exceeds 20%, a moderate limit alert (yellow mark 🟡) will be shown in the report or CLI output. If the average exceeds 40%, a warning limit alert (red mark 🔴) will be shown instead.
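To make the moderate/warning logic concrete, here is a minimal Python sketch of how a measured value could be checked against the `cpu_avg` key. It is an illustration only, not Apptim's implementation: the `classify` function, the `OPERATORS` table, and every operator other than `">"` are assumptions introduced for this example.

```python
import operator

# Comparison operators a threshold file might use. Only ">" appears in the
# cpu_avg example; the others are assumptions added for illustration.
OPERATORS = {
    ">": operator.gt,
    ">=": operator.ge,
    "<": operator.lt,
    "<=": operator.le,
}

# Same content as the cpu_avg key, written as a Python dict.
CPU_AVG = {
    "moderate": {"operator": ">", "value": 20},
    "warning": {"operator": ">", "value": 40},
}


def classify(measured, thresholds):
    """Return "warning", "moderate", or "ok" for a measured metric value."""
    # Check the more severe limit first so it takes precedence.
    for level in ("warning", "moderate"):
        rule = thresholds.get(level)
        if rule is None:
            continue
        compare = OPERATORS[rule["operator"]]
        if compare(measured, rule["value"]):
            return level
    return "ok"


if __name__ == "__main__":
    for cpu in (15, 25, 45):
        print(cpu, classify(cpu, CPU_AVG))
```

Because the operator is a strict `">"`, a value of exactly 20 stays green in this sketch, and a value of exactly 40 is still only a moderate alert.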

Benefits of using custom mobile performance thresholds

When you run mobile performance tests from a CI/CD pipeline, the dev team is alerted as soon as a critical threshold is exceeded. They can then fix the problem before a new app version is released.

Once you run a few tests with Apptim, you’ll have enough data showing how your app performs on a specific device or set of devices. You can then use those initial test results to set up a baseline with your own thresholds per device. This will also help you identify performance regressions in future versions of your app before they reach end users.
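As a rough illustration of turning initial results into a baseline, the Python sketch below averages a few measured values for one metric and derives moderate and warning limits from that average. The helper names (`baseline_thresholds`, `to_yaml`), the 10% and 25% margins, and the sample values are all hypothetical choices for this example, not Apptim recommendations. Pick margins that match how much variation you see between runs on the same device.

```python
from statistics import mean


def baseline_thresholds(samples, moderate_margin=0.10, warning_margin=0.25):
    """Build moderate/warning limits from observed values of one metric.

    The margins are illustrative assumptions, not Apptim recommendations.
    """
    if not samples:
        raise ValueError("need at least one measurement")
    baseline = mean(samples)
    return {
        "moderate": {
            "operator": ">",
            "value": round(baseline * (1 + moderate_margin), 1),
        },
        "warning": {
            "operator": ">",
            "value": round(baseline * (1 + warning_margin), 1),
        },
    }


def to_yaml(key, thresholds):
    """Render one metric in the same layout as the cpu_avg example."""
    lines = [f"{key}:"]
    for level in ("moderate", "warning"):
        rule = thresholds[level]
        lines.append(f"  {level}:")
        lines.append(f'    operator: "{rule["operator"]}"')
        lines.append(f"    value: {rule['value']}")
    return "\n".join(lines)


if __name__ == "__main__":
    # Hypothetical average CPU readings (%) from three runs on one device.
    runs = [18.0, 20.0, 22.0]
    print(to_yaml("cpu_avg", baseline_thresholds(runs)))
```

The printed text can be pasted into a threshold file for that device. Repeat the process with results from each device class you test on.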
